Motivation

The handling of digital research data and research software plays an increasingly important role in all application areas of the natural sciences and engineering, primarily because of the growing volume of data obtained from experiments and simulations. Without suitable analysis methods, this data can no longer be used in a meaningful way. This is especially true for materials science, where research into new materials is becoming increasingly complex. A key prerequisite for smooth data analysis is the structured storage of research data and the associated metadata. It is therefore essential to have a concrete infrastructure for data and software management that is specifically adapted to the needs of the computational sciences and can be used centrally and decentrally, internally and publicly, without major hurdles. This infrastructure should support researchers throughout the entire research process: from the creation or use of simulation software, through the structured storage and exchange of data for analysis, to the publication of the data.

Objective

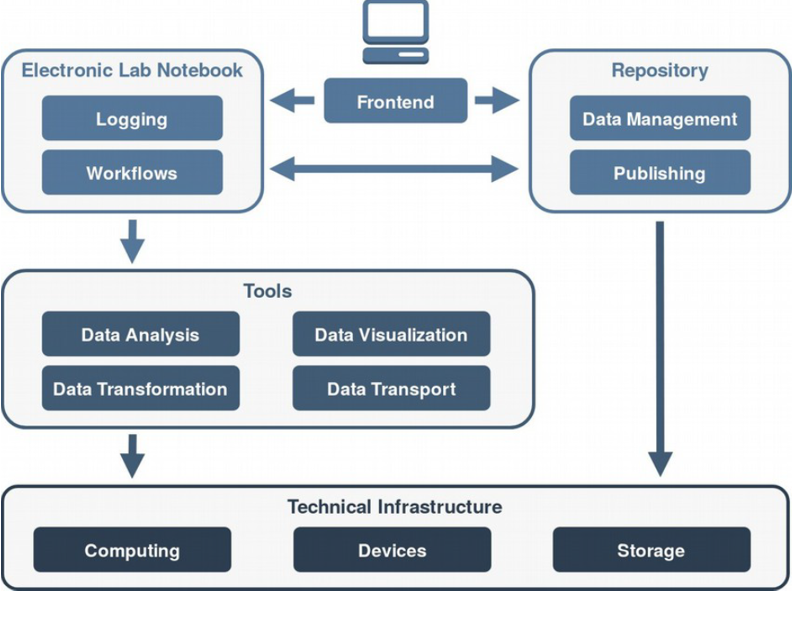

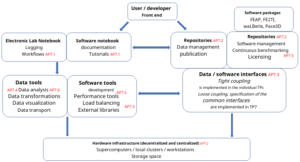

The main objective of sub-project 7 (SP7) is to bundle and coordinate competencies in the areas of HPC software engineering, sustainable data management and efficient data analysis. SP7 aims to create a common infrastructure for the efficient evaluation and sustainable handling of large amounts of data and software in scientific computing. This infrastructure can be used across locations and is adapted to the special needs of the research group. It is intended to

- establish software interoperability between the sub-projects,

- guarantee the sustainability of the software,

- ensure scalability and performance, and

- facilitate portability to new computer architectures.

To this end, SP7 pursues two lines of work. First, it develops and implements methods and interfaces that enable the exchange of multiphysical fields across multiple scales, the derivation of boundary conditions and effective parameters from them, and the uniform in-situ evaluation of experiments and simulations. Second, it examines and implements techniques and approaches for the short- and medium-term storage of data and software within the workflow, as well as for the long-term archiving of data and software for the publication and sustainable use of project results.

Another part of the infrastructure to be created is a common software repository for development with continuous integration and continuous benchmarking on different platforms and high-performance computers. In addition, know-how from across the sub-projects in developing functional and non-functional software tests is to be pooled. The allocation of computing resources should also be coordinated centrally, and shared software should be made available in a uniform framework to avoid conflicts between different versions of that software.

Work program

The work program comprises six research phases. In phase 1, common standards for software, documentation and general data interfaces are defined. In phase 2, the technical infrastructure is created. In phase 3, numerical data and software interfaces for the transfer of experimental data between the sub-projects are implemented. In phase 4, in-situ analysis routines are created. In phase 5, continuous integration and continuous benchmarking are carried out. Phase 6 covers the development of data analysis methods to be used across the sub-projects.

Sub-project management

Prof. Dr. rer. nat. Britta Nestler

Hochschule Karlsruhe – Technik und Wirtschaft (HsKA)

Institute for Digital Materials Research (IDM)

- Email: britta.nestler@kit.edu

Dr.-Ing. Michael Selzer

Hochschule Karlsruhe – Technik und Wirtschaft (HsKA)

Institute for Digital Materials Research (IDM)

- Email: michael.selzer@kit.edu

Prof. Dr.-Ing. Harald Köstler

Friedrich-Alexander-Universität Erlangen-Nürnberg (FAU)

Chair of Computer Science 10 - System Simulation (LSS)

Prof. Dr. rer. nat. Ulrich Rüde

Friedrich-Alexander-Universität Erlangen-Nürnberg (FAU)

Chair of Computer Science 10 - System Simulation (LSS)

- Email: ulrich.ruede@fau.de

Sub-project researcher

Christoph Alt, M.Sc.

Friedrich-Alexander-Universität Erlangen-Nürnberg (FAU)

Chair of Computer Science 10 - System Simulation (LSS)

- Email: christoph.alt@fau.de